Projects

This is a list of projects I've done that I think are quite notable. Scroll down, or use Ctrl + F (Cmd + F on a Mac), to look up the things I've made by keyword. You'll quickly get the idea that I'm a big robotics and machine learning guy.

Augmented Reality

Surgent

Surgent is a project I built with an amazing team at a hackathon (MHacks 2025). I hope to keep improving it once the 2026 Snap Specs (smart glasses) are commercially available.

We made an AR surgical training platform with biometrics and an AI agent coach (Gemini Live) that gives live feedback. After you complete the AR training, you also receive a feedback summary by email, and you can reply with follow-up questions for interactive feedback beyond the session via the AgentMail API. (AgentMail is a startup that's basically ChatGPT, but over email.)

You might be wondering why we made this. While the United States has plenty of surgeons, 5 billion people worldwide do not have access to surgical care, which, according to the WHO, results in 18 million deaths every year because of a shortage of surgeons. That's 18 million families, friends, and communities at the mournful mercy of something that could be prevented with more educated surgeons. So we followed this bloody trail, asking: why doesn't the world have more surgeons? Because surgical training is limited. There are many non-trivial barriers to getting surgical training: expensive equipment, a shortage of mentorship, and no one with a wound volunteering to be practiced on.

While some nations are progressing fast in health, others are left in the dust. That's why we made Surgent, Snapchat's first augmented reality surgical training system with biometrics and AI surgical agent consultation.

Robotics and Embedded Systems

mARVin (autonomous robotic vehicle)

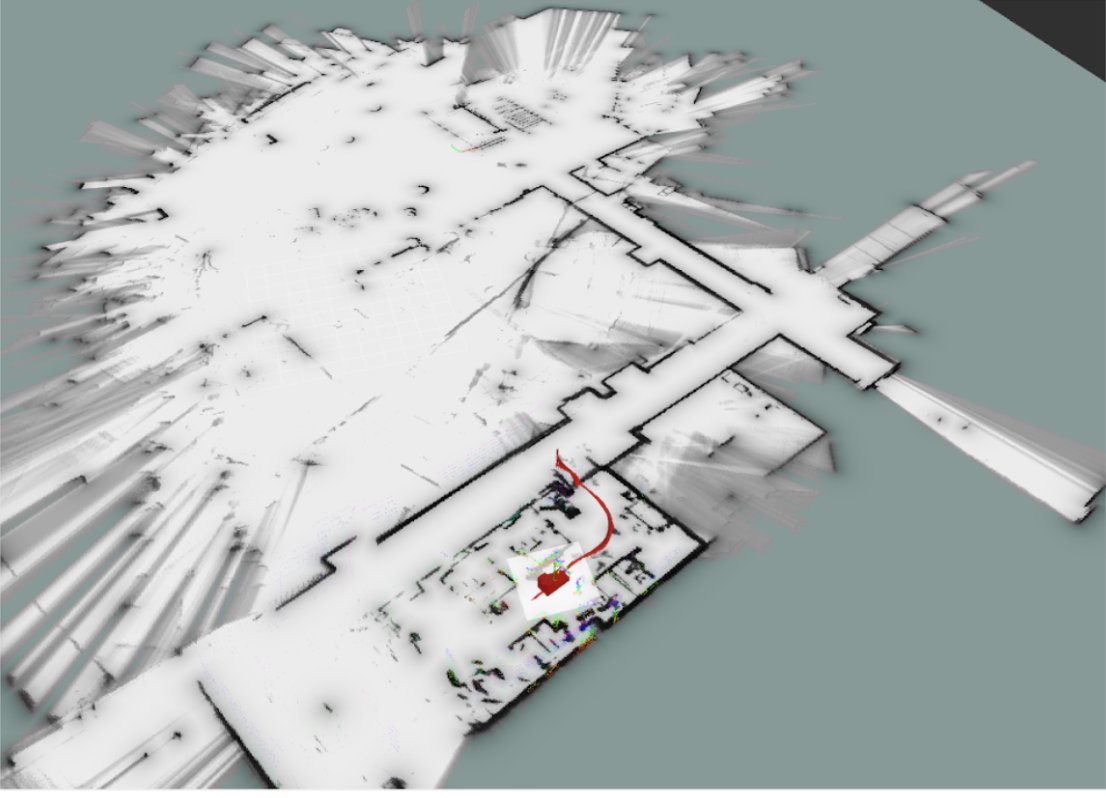

This is mARVin, the autonomous robotic vehicle we've been working on with the University of Michigan Autonomous Robotic Vehicle team, an engineering student organization. I've worked on integrating mARVin's sensors, including IMUs, wheel encoders, stereo cameras, and LiDAR, and using them for SLAM (Simultaneous Localization and Mapping) algorithms in ROS2.
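Wheel encoders are one of the sensor inputs that typically feed a SLAM pipeline like this one. As a minimal sketch (not mARVin's actual code, and assuming a differential-drive base), here is how encoder tick counts can be turned into a dead-reckoned 2D pose. `Pose2D`, `update_odometry`, and the default tick resolution, wheel radius, and track width are all hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class Pose2D:
    x: float = 0.0      # meters
    y: float = 0.0      # meters
    theta: float = 0.0  # heading in radians, counterclockwise from +x


def update_odometry(pose, left_ticks, right_ticks,
                    ticks_per_rev=1024, wheel_radius=0.15, track_width=0.6):
    """Dead-reckon a differential-drive pose from encoder tick deltas.

    ticks_per_rev, wheel_radius [m], and track_width [m] are hypothetical defaults.
    """
    meters_per_tick = 2.0 * math.pi * wheel_radius / ticks_per_rev
    d_left = left_ticks * meters_per_tick
    d_right = right_ticks * meters_per_tick

    d_center = 0.5 * (d_left + d_right)         # distance traveled by the axle midpoint
    d_theta = (d_right - d_left) / track_width  # change in heading

    # Advance along the heading at the middle of the step (midpoint approximation).
    mid_theta = pose.theta + 0.5 * d_theta
    new_theta = pose.theta + d_theta
    return Pose2D(
        x=pose.x + d_center * math.cos(mid_theta),
        y=pose.y + d_center * math.sin(mid_theta),
        theta=math.atan2(math.sin(new_theta), math.cos(new_theta)),  # wrap to [-pi, pi]
    )


pose = Pose2D()
pose = update_odometry(pose, 1024, 1024)  # both wheels turn one full revolution
print(pose)
```

Raw odometry like this drifts over time, which is why a SLAM system fuses it with IMU, camera, and LiDAR data instead of relying on it alone.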

This was my favorite thing I've done in college. You can ask me if you're curious about the details.

Web Robot Simulator and Interface

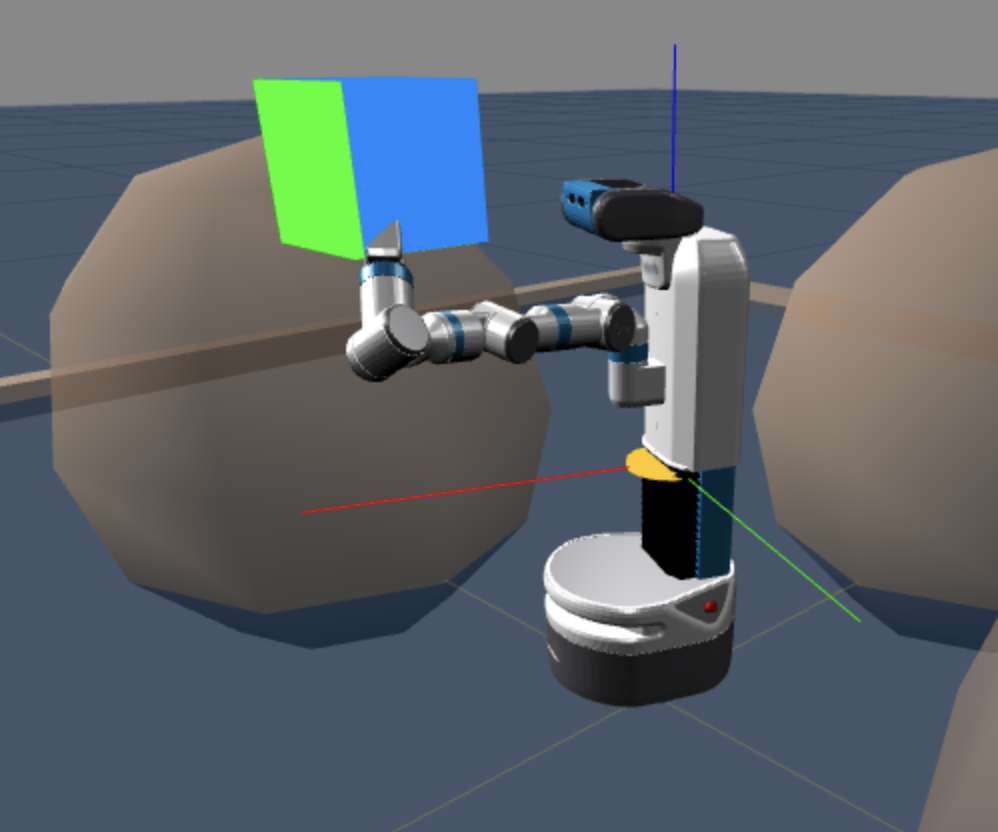

This is a web-based robot simulator, built with Three.js, for path planning, forward kinematics, inverse kinematics, PID control, and more. It can also render robots from a JSON file structured similarly to a URDF (Unified Robot Description Format) file, an XML format for robotics that defines a robot's geometries and joints. I also implemented a feature that lets the software interface with the Fetch robot via rosbridge. I want to thank Professor Chad Jenkins for helping me fulfill a goal I'd had since high school: writing code that runs on an actual Fetch robot, the first lab robot I ever saw in person.
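To make the JSON-to-kinematics idea concrete, here is a minimal forward kinematics sketch. The simulator's real JSON schema isn't shown, so the field names below are invented, and the robot is simplified to a planar chain of revolute joints rather than a full 3D model.

```python
import json
import math

# Hypothetical URDF-like JSON: a planar serial chain of revolute joints.
# The simulator's real schema isn't shown; these field names are invented.
ROBOT_JSON = """
{
  "name": "planar_arm",
  "joints": [
    {"name": "shoulder", "type": "revolute", "link_length": 1.0},
    {"name": "elbow",    "type": "revolute", "link_length": 0.8},
    {"name": "wrist",    "type": "revolute", "link_length": 0.3}
  ]
}
"""


def forward_kinematics(robot, joint_angles):
    """Return the (x, y) positions along the chain: the base, then the end of each link.

    The last point is the end effector. Each revolute joint rotates everything
    after it, so angles accumulate along the chain and each link extends along
    the accumulated heading.
    """
    if len(joint_angles) != len(robot["joints"]):
        raise ValueError("expected one angle per joint")
    x, y, heading = 0.0, 0.0, 0.0
    points = [(x, y)]
    for joint, angle in zip(robot["joints"], joint_angles):
        if joint["type"] != "revolute":
            raise ValueError(f"unsupported joint type: {joint['type']}")
        heading += angle
        x += joint["link_length"] * math.cos(heading)
        y += joint["link_length"] * math.sin(heading)
        points.append((x, y))
    return points


robot = json.loads(ROBOT_JSON)
for px, py in forward_kinematics(robot, [math.pi / 2, -math.pi / 2, 0.0]):
    print(f"({px:.3f}, {py:.3f})")
```

In 3D, the same idea composes one homogeneous transform per joint, built from the joint's origin and rotation axis, which is the information a URDF joint element describes.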

This is an extension of the robot simulator that does path planning via RRT (Rapidly-exploring Random Trees). I chose RRT over A* because, while A* can find a shorter path, its exhaustive search makes it computationally expensive, so it can be slow in large, complex, or high-dimensional search spaces like this one, which is 3D. RRT can be faster because it is a sampling-based algorithm: it explores nonconvex, high-dimensional spaces, such as the Three.js scene shown in the video above, by random sampling instead of exhaustive search.
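Here is a minimal sketch of plain RRT in 3D, assuming spherical obstacles, straight-line steering, and brute-force nearest-neighbor search. It is not the simulator's code (which checks against a Three.js scene), and `rrt` and its parameters are hypothetical.

```python
import math
import random


def rrt(start, goal, obstacles, bounds, step=0.5, goal_tol=0.5,
        goal_bias=0.1, max_iters=5000, seed=0):
    """Plain RRT in 3D.

    obstacles: list of (center, radius) spheres; bounds: ((xmin, xmax), (ymin, ymax), (zmin, zmax)).
    Returns a list of waypoints from start to goal, or None if no path is found.
    """
    rng = random.Random(seed)

    def collision_free(a, b, resolution=0.05):
        # Check evenly spaced samples along the segment a -> b against every sphere.
        n = max(1, int(math.dist(a, b) / resolution))
        for i in range(n + 1):
            t = i / n
            p = tuple(a[k] + t * (b[k] - a[k]) for k in range(3))
            if any(math.dist(p, c) <= r for c, r in obstacles):
                return False
        return True

    nodes = [start]
    parent = [None]  # parent[i] is the index of node i's parent in the tree
    for _ in range(max_iters):
        # 1) Sample a random point, occasionally the goal itself (goal bias).
        if rng.random() < goal_bias:
            sample = goal
        else:
            sample = tuple(rng.uniform(lo, hi) for lo, hi in bounds)
        # 2) Find the nearest tree node (brute force, for clarity).
        near_i = min(range(len(nodes)), key=lambda i: math.dist(nodes[i], sample))
        near = nodes[near_i]
        d = math.dist(near, sample)
        if d == 0.0:
            continue
        # 3) Steer: move at most `step` from the nearest node toward the sample.
        s = min(step, d) / d
        new = tuple(near[k] + s * (sample[k] - near[k]) for k in range(3))
        if not collision_free(near, new):
            continue
        nodes.append(new)
        parent.append(near_i)
        # 4) Stop once the new node can connect straight to the goal.
        if math.dist(new, goal) <= goal_tol and collision_free(new, goal):
            path, i = [], len(nodes) - 1
            while i is not None:
                path.append(nodes[i])
                i = parent[i]
            path.reverse()
            if path[-1] != goal:
                path.append(goal)
            return path
    return None


obstacles = [((2.5, 2.5, 2.5), 1.0)]
bounds = ((0.0, 5.0), (0.0, 5.0), (0.0, 5.0))
path = rrt((0.0, 0.0, 0.0), (5.0, 5.0, 5.0), obstacles, bounds)
print(f"found a path with {len(path)} waypoints" if path else "no path found")
```

Because RRT returns the first feasible path it finds, its paths are usually jagged and not optimal, which is the trade-off against A* described above.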

Stacking Blocks with a KUKA LBR iiwa

Point Cloud ICP

Iterative Closest Point (ICP) is a perception algorithm for estimating the pose of an object in a scene, given a model of that object. It alternates between matching each model point to its closest point in the scene's point cloud and computing the rigid transform (rotation and translation) that minimizes the distance between the matched points, repeating until the alignment converges. Once the model is fitted, we know the object's pose (its position and orientation). I made the visualization with Open3D. I plan on explaining the math, and how I optimized the code (via a certain data structure and design decision), in detail in a blog post soon.
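The loop above can be sketched in a few lines. This is a minimal point-to-point ICP in 2D, not the Open3D-based 3D version: in 2D the best rotation has a closed form (an `atan2` of summed dot and cross products) instead of the SVD step used in 3D, and nearest neighbors are found by brute force. All function names are hypothetical.

```python
import math


def best_rigid_transform_2d(src, dst):
    """Least-squares rotation angle and translation mapping src[i] onto dst[i] (closed form in 2D)."""
    n = len(src)
    sx, sy = sum(p[0] for p in src) / n, sum(p[1] for p in src) / n
    dx, dy = sum(q[0] for q in dst) / n, sum(q[1] for q in dst) / n
    dot_sum = cross_sum = 0.0
    for (px, py), (qx, qy) in zip(src, dst):
        px, py, qx, qy = px - sx, py - sy, qx - dx, qy - dy  # center both clouds
        dot_sum += px * qx + py * qy
        cross_sum += px * qy - py * qx
    theta = math.atan2(cross_sum, dot_sum)
    c, s = math.cos(theta), math.sin(theta)
    # Translation maps the rotated source centroid onto the target centroid.
    return theta, dx - (c * sx - s * sy), dy - (s * sx + c * sy)


def transform_points(theta, tx, ty, points):
    c, s = math.cos(theta), math.sin(theta)
    return [(c * x - s * y + tx, s * x + c * y + ty) for x, y in points]


def icp_2d(model, scene, max_iters=50, tol=1e-12):
    """Point-to-point ICP. Returns the accumulated (theta, tx, ty) and the last mean squared error."""
    theta, tx, ty = 0.0, 0.0, 0.0
    current, prev_err = list(model), math.inf
    for _ in range(max_iters):
        # 1) Correspondences: closest scene point for each model point (brute force).
        matches = [min(scene, key=lambda q: math.dist(p, q)) for p in current]
        err = sum(math.dist(p, q) ** 2 for p, q in zip(current, matches)) / len(current)
        if prev_err - err < tol:
            break
        prev_err = err
        # 2) Best rigid transform for these correspondences, applied to the model.
        d_theta, d_tx, d_ty = best_rigid_transform_2d(current, matches)
        current = transform_points(d_theta, d_tx, d_ty, current)
        # 3) Compose with the running estimate: x -> R_d (R x + t) + t_d.
        c, s = math.cos(d_theta), math.sin(d_theta)
        tx, ty = c * tx - s * ty + d_tx, s * tx + c * ty + d_ty
        theta += d_theta
    return (theta, tx, ty), err


model = [(0.0, 0.0), (3.0, 0.0), (0.0, 2.0), (4.0, 3.0), (1.0, 5.0)]
scene = transform_points(0.1, 0.2, -0.1, model)  # the "detected" object: model moved by a known pose
(theta, tx, ty), err = icp_2d(model, scene)
print(f"theta={theta:.4f} rad, t=({tx:.4f}, {ty:.4f}), mse={err:.1e}")
```

ICP only converges to the right pose from a reasonable initial guess. Here the misalignment is small enough that every first-round match is already correct; larger misalignments can lock onto a wrong local minimum.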

Biped Robot Locomotion

Through this collection of projects, I learned about locomotion for bipedal robots. I want to thank Professor Jessy Grizzle and Dr. Wami Ogunbi for mentoring me as a research assistant; I worked on this until Wami completed her PhD. I learned optimal control techniques like Model Predictive Control (MPC), mathematical concepts (e.g., Lie algebras and manifolds), and control concepts (e.g., feedback linearization and PID), along with how they're applied to robot control. I also self-studied a bit of reinforcement learning via Deep Deterministic Policy Gradients (DDPG). I did this to:

1.) Audit the "Biped Bootcamp," a paper by Dr. Wami Ogunbi that is now incorporated into the University of Michigan Robotics Department's undergraduate curriculum

2.) Have enough background to work on some sort of reinforcement learning for bipedal robots as a side project

3.) Make a robust algorithm for the robot to walk on non-stationary or inclined surfaces. Here's the simulation of the robot walking on inclines and stairs:
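Separately from that simulation, here is a minimal sketch of feedback linearization, one of the control concepts listed above: use a model to cancel a system's nonlinear dynamics so that a simple linear controller can take over. It uses a single actuated pendulum joint with hypothetical parameters as a deliberately tiny stand-in for a biped; it is not the controller from these projects.

```python
import math

# Hypothetical single-joint pendulum parameters (a stand-in, not the biped model).
MASS, LENGTH, DAMPING, GRAVITY = 1.0, 0.5, 0.1, 9.81  # kg, m, N*m*s/rad, m/s^2
INERTIA = MASS * LENGTH ** 2                          # point mass at the end of the link


def pendulum_accel(theta, omega, torque):
    """Nonlinear dynamics: I * theta'' = torque - b * theta' - m * g * l * sin(theta)."""
    return (torque - DAMPING * omega - MASS * GRAVITY * LENGTH * math.sin(theta)) / INERTIA


def feedback_linearizing_torque(theta, omega, theta_des, kp=25.0, kd=10.0):
    """Cancel the nonlinear terms so that theta'' = v, then choose v with PD gains."""
    v = -kp * (theta - theta_des) - kd * omega  # desired closed-loop acceleration
    return INERTIA * v + DAMPING * omega + MASS * GRAVITY * LENGTH * math.sin(theta)


def simulate(theta0, theta_des, dt=1e-3, duration=5.0):
    theta, omega = theta0, 0.0
    for _ in range(round(duration / dt)):
        torque = feedback_linearizing_torque(theta, omega, theta_des)
        omega += dt * pendulum_accel(theta, omega, torque)  # semi-implicit Euler
        theta += dt * omega
    return theta, omega


final_theta, final_omega = simulate(theta0=0.0, theta_des=2.0)
print(f"final angle {final_theta:.4f} rad, final velocity {final_omega:.1e} rad/s")
```

With the nonlinearity cancelled, the tracking error obeys e'' + kd e' + kp e = 0, so kp = 25 and kd = 10 give a critically damped response with a natural frequency of 5 rad/s. The catch is that the cancellation is only as good as the model.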

Computer Graphics, Animation, and Simulations

Basically anything relevant to graphics and simulations, including projects where I apply numerical analysis concepts.

Numerical Methods Applied to PID Control

I made a simple PID pendulum simulator that uses various numerical analysis algorithms, including Euler, Verlet, Velocity Verlet, and fourth-order Runge–Kutta, to approximate the integral term (the I in PID).

It's tuned to be stable at the desired setpoint, x_desired.
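One way to set this up, sketched here under assumptions (hypothetical gains and a simple pendulum model), is to append the error integral z to the pendulum's state so the same integrator advances both the dynamics and the I term. Only Euler and fourth-order Runge–Kutta are shown; the Verlet variants are omitted for brevity, and all names are hypothetical apart from `x_desired`.

```python
import math

# Hypothetical pendulum and PID gains (not the original simulator's values).
G, L, DAMPING = 9.81, 1.0, 0.2
KP, KI, KD = 40.0, 40.0, 8.0


def derivatives(state, x_desired):
    """Time derivative of the augmented state (x, v, z).

    x: pendulum angle [rad], v: angular velocity [rad/s],
    z: running integral of the tracking error, i.e. the I term's accumulator.
    """
    x, v, z = state
    error = x_desired - x
    # PID output as an angular acceleration; -v equals d(error)/dt for a constant x_desired.
    u = KP * error + KI * z - KD * v
    a = -(G / L) * math.sin(x) - DAMPING * v + u
    return (v, a, error)  # dz/dt = error


def euler_step(state, dt, x_desired):
    k = derivatives(state, x_desired)
    return tuple(s + dt * ds for s, ds in zip(state, k))


def rk4_step(state, dt, x_desired):
    def shift(s, k, h):
        return tuple(si + h * ki for si, ki in zip(s, k))

    k1 = derivatives(state, x_desired)
    k2 = derivatives(shift(state, k1, dt / 2), x_desired)
    k3 = derivatives(shift(state, k2, dt / 2), x_desired)
    k4 = derivatives(shift(state, k3, dt), x_desired)
    return tuple(s + dt / 6 * (a + 2 * b + 2 * c + d)
                 for s, a, b, c, d in zip(state, k1, k2, k3, k4))


def simulate(step, x_desired=1.0, dt=0.01, duration=20.0):
    state = (0.0, 0.0, 0.0)
    for _ in range(round(duration / dt)):
        state = step(state, dt, x_desired)
    return state


for name, step in [("Euler", euler_step), ("RK4", rk4_step)]:
    x, v, z = simulate(step)
    print(f"{name}: final angle {x:.4f} rad (x_desired = 1.0)")
```

RK4 costs four derivative evaluations per step versus Euler's one, in exchange for fourth-order rather than first-order accuracy. At the setpoint, the integral term supplies exactly the torque needed to hold the pendulum against gravity.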

Linear Complementarity Problem Simulation

I simulated the collision and bouncing of a box by rewriting the contact constraints and dynamics as a linear complementarity problem (LCP) for contact resolution. Changing the coefficient of restitution adjusts how bouncy the box is.

I know the Bullet and NVIDIA PhysX physics engines use LCP, whereas NVIDIA Isaac Sim uses some sort of penalty-based approach to the problem (as of when I wrote this).
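Here is a minimal sketch of the idea under simplifying assumptions: the box moves only vertically (no rotation or friction), contact is resolved at the velocity level with a restitution target, and the LCP is solved with projected Gauss-Seidel, one common iterative LCP solver. None of this is necessarily how the original simulation works, and `solve_lcp_pgs`, `simulate_bouncing_box`, and `local_maxima` are hypothetical names. With a single contact the LCP is 1x1, but the solver handles n contacts.

```python
def solve_lcp_pgs(A, b, iters=100):
    """Projected Gauss-Seidel: find z >= 0 with w = A z + b >= 0 and z[i] * w[i] == 0.

    Converges for symmetric positive-definite A, such as a contact effective-mass matrix.
    """
    n = len(b)
    z = [0.0] * n
    for _ in range(iters):
        for i in range(n):
            w_i = b[i] + sum(A[i][j] * z[j] for j in range(n))
            z[i] = max(0.0, z[i] - w_i / A[i][i])  # Gauss-Seidel update, projected onto z >= 0
    return z


def simulate_bouncing_box(drop_height=1.0, restitution=0.5, mass=1.0,
                          g=9.81, dt=1e-3, duration=3.0):
    """A box moving only vertically, tracked by the height y of its bottom face
    above the ground at y = 0. Returns y after every step."""
    y, v = drop_height, 0.0
    heights = []
    for _ in range(round(duration / dt)):
        v -= g * dt  # gravity gives the unconstrained velocity
        if v < 0.0 and y + v * dt <= 0.0:  # the contact would close during this step
            # 1x1 velocity-level LCP: impulse z >= 0, w = v_after + e * v_before >= 0, z * w = 0
            A = [[1.0 / mass]]             # effective inverse mass J M^-1 J^T with J = [1]
            b = [(1.0 + restitution) * v]
            (impulse,) = solve_lcp_pgs(A, b)
            v += impulse / mass            # ends up at v_after = -restitution * v_before
        y += v * dt
        heights.append(y)
    return heights


def local_maxima(values):
    """Peaks of a sampled trajectory (the apex of each bounce)."""
    return [values[i] for i in range(1, len(values) - 1)
            if values[i - 1] < values[i] >= values[i + 1]]


apexes = local_maxima(simulate_bouncing_box(restitution=0.5))
print("first bounce apexes:", [round(h, 3) for h in apexes[:3]])
```

Raising `restitution` toward 1 makes the box bounce higher, and setting it to 0 makes it stop at first contact. In the ideal model, each bounce peaks at about restitution squared times the previous height.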

I can also explain the math that makes this work if enough people request it.